ITP

Sustainable Energy – kinetics

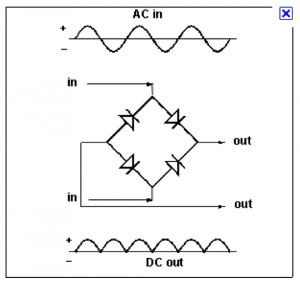

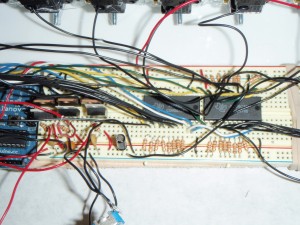

Using this circuit I’m trying to light up an LED by spinning the wheel on my car. HOWEVER, it’s not working. I think I might be doing something wrong with the four diodes I’m using to make a full-wave rectifier. Later I’ll use a multimeter to see where I’m going wrong (and to measure the current and voltage I’m generating by spinning the wheel), and I’ll also try a single diode as a half-wave rectifier.
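One possible culprit worth checking with the multimeter: in a four-diode bridge the current always passes through two diodes, so the LED sees the generated voltage minus two diode drops. The Python sketch below models that with made-up numbers. The 0.7 V diode drop, the 2.0 V LED forward voltage, and the peak voltages are assumptions for illustration, not measurements from this circuit.

```python
import math

DIODE_DROP_V = 0.7   # assumed forward drop of one silicon diode
LED_FORWARD_V = 2.0  # assumed forward voltage of the LED


def full_wave(v_in, drop=DIODE_DROP_V):
    """Bridge rectifier: both half-cycles pass, each through two diodes."""
    return max(abs(v_in) - 2 * drop, 0.0)


def half_wave(v_in, drop=DIODE_DROP_V):
    """Single diode: only the positive half-cycle passes, minus one drop."""
    return max(v_in - drop, 0.0)


def led_lights(peak_v, rectifier):
    """True if any point of one AC cycle exceeds the LED forward voltage."""
    samples = [peak_v * math.sin(2 * math.pi * i / 100) for i in range(100)]
    return max(rectifier(v) for v in samples) > LED_FORWARD_V


if __name__ == "__main__":
    for peak in (2.0, 3.0, 4.0):
        print(peak, "V peak -> full-wave:", led_lights(peak, full_wave),
              "| half-wave:", led_lights(peak, half_wave))
```

Under these assumptions, a wheel that only generates about 3 V peak would light the LED through a single diode but not through the bridge, which is one reason the half-wave test is a useful comparison.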

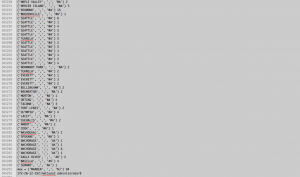

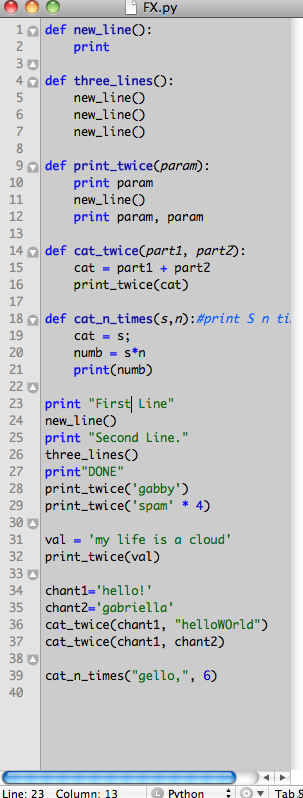

more python work

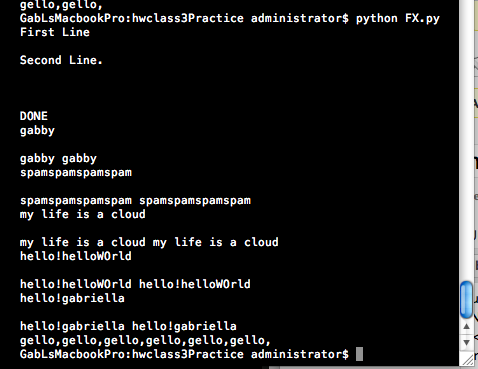

Just fooling around and trying to learn Python. Super trivial stuff; I’m mostly putting it here as a reference for myself, but hopefully I’ll have something more complex soon.

INPUT:

Two super awesome machines

The assignment: Pick one impressive machine/mechanism you consider art, and one you consider practical/functional. They don’t have to be electromechanical, but they have to move. Post pictures/videos on your blog and your response to the pieces.

“ART”

Alan Rath, autonomously mobile robots: Rover, I Want, I like to watch

I don’t know too much about Alan Rath’s work, but I do find it impressive. The kinetics of the robotic creatures are wonderfully inventive, and the machines move smoothly, sensibly and organically. There is an eerie unification of machine and organism, and I read it as a commentary or investigation on the relationship of humans and machines / computers.

practical / functional

My favorite mechanism / power tool:

the Stihl 261 chainsaw

The mechanics of it: A chainsaw (or chain saw) is a portable mechanical saw, powered by electricity, compressed air, hydraulic power, or, most commonly, a two-stroke engine. With a two-stroke engine, the piston goes through intake, crankcase compression, transfer/exhaust, compression, and power in just two strokes (the underlying thermodynamic cycle is called the Otto cycle).

Here are some images showing how a two-stroke engine works:

My pics :

LEGOS

Natalie and I built a simple machine out of Legos using the Technic set. Then we added a remote control. Put simply, it is a remote control car.

Rube Goldberg machine

I worked with Christine, Ivana, and Hana on the following first assignment, which was captured on the video above:

Build a machine that cracks an egg

Your machine should be loaded with an egg. It should be reasonably quick to reload your machine with a new egg between runs (no disassembly of the machine). Acceptable triggers to start the machine include (for example) a button press, pulling/pushing a lever, or yanking a string. Your machine should consist of at least 5 energy transfers (steps). After the initial start no human intervention is allowed. You may use any materials you can find/make/buy. Each step should be unique and contribute to the goal. Basically this means you can’t, for example, have some rolling ball hit 5 pins on its way down a ramp and have those actions count as steps (lame). The majority of the egg and NO MORE than 1/2 of the shell can end up in the final receptacle to get full credit.

You will get 2 attempts. Your machine can take no longer than 5 minutes to complete the task from the time you initiate movement.

Mechanisms and Things that move

I can’t believe I got in??

First Assignment HW

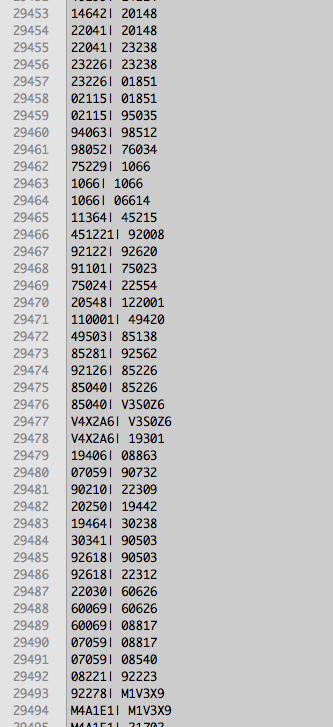

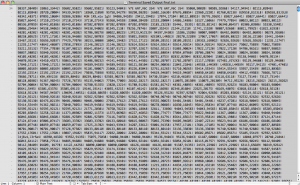

I made a simple program that reads in zip code data for carpool requests throughout the US and outputs the city and state name. The input data (which I got from a friend’s wiki, and which contains about 40,000 pairs of zips) is formatted like so: | zip, zip|

input:

19446,19477| 19446,19477| 21224,20019| 90840,92660| 02114,02132| 17047,17128| 94612,94903| 94612,94903| 94612,94903| 62034,62035| 62034,62035| 94110,94403| 94110,94403| 07306,07059|

The first zip code is the origin point, the second is the destination. Lastly, I print out the location that occurred most often, and how many times it occurred.
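For reference, here is a minimal Python 3 sketch of that parse-and-count step. It is not the program from the post (that is behind the link below). The function names are made up, and `ZIP_TO_PLACE` is a tiny hypothetical stand-in for a real zip code database. Zips stay as strings so that leading zeros (as in 02114) survive.

```python
from collections import Counter

# Hypothetical stand-in for a full zip database (illustrative entries only).
ZIP_TO_PLACE = {
    "94612": "Oakland, CA",
    "94903": "San Rafael, CA",
}

RAW = ("19446,19477| 19446,19477| 21224,20019| 90840,92660| 02114,02132| "
       "17047,17128| 94612,94903| 94612,94903| 94612,94903| 62034,62035| "
       "62034,62035| 94110,94403| 94110,94403| 07306,07059|")


def parse_pairs(raw):
    """Turn 'zip,zip| zip,zip|' into a list of (origin, destination) tuples."""
    pairs = []
    for chunk in raw.split("|"):
        chunk = chunk.strip()
        if not chunk:
            continue  # skip the empty piece after the final '|'
        origin, destination = (z.strip() for z in chunk.split(","))
        pairs.append((origin, destination))
    return pairs


def most_common_zip(pairs):
    """Return (zip, count) for the zip seen most often, in either position."""
    counts = Counter(z for pair in pairs for z in pair)
    return counts.most_common(1)[0]


if __name__ == "__main__":
    top_zip, count = most_common_zip(parse_pairs(RAW))
    place = ZIP_TO_PLACE.get(top_zip, "unknown location")
    print(top_zip, place, "appeared", count, "times")
```

Counting origins and destinations together is a design choice here; counting only origins would just mean feeding `pair[0]` to the `Counter`.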

Questions I have: How can I embed it online?

Next: Clean up the output. Get the lats / longs from the zips, and export it to a map of sorts.

Check out the Python code on the next page:

(more…)

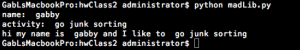

my first (and most banal) madlib – beginning of assignment one

my first stab at learning Python:

hi my name is gabby and i like to go junk sorting

madLib.py document:

name = raw_input("name: ")

activity = raw_input("activity: ")

print "hi my name is", name, "and i like to", activity

First assignment

There were many similar iterations made, but I’d like to give it a waggy tail

I would eventually like to make an animation of the bodily systems, and make animations of life systems and recurring patterns in nature.

I have to figure out why this excites me, but it does:

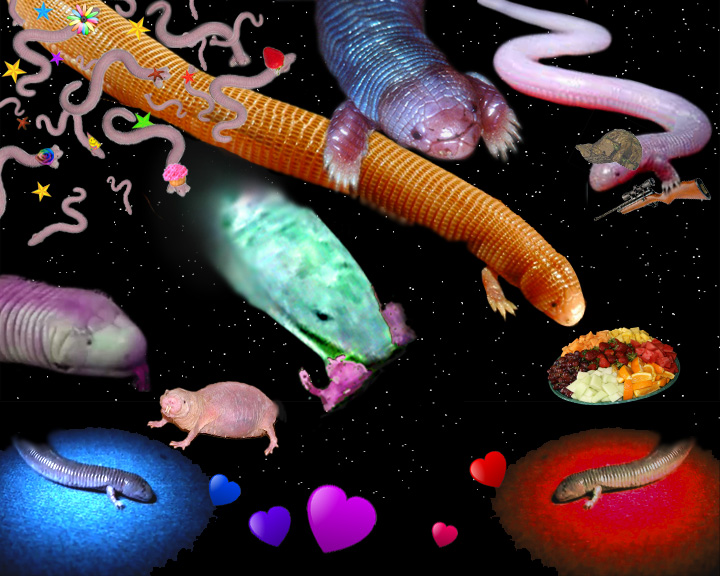

Animals and a portrait of one

This is a portrait of the ajolote, or Mexican mole lizard. It belongs to Amphisbaenia (the worm lizards, most of which are legless), a group of squamates, or scaled reptiles. —qualities:

(more…)

Proj. Dev. studio – project idea

An exploratory project about timing and computers, specifically related to sound:

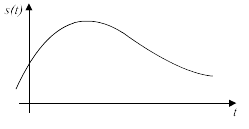

I would like to explore sound and how it is represented with data, and demonstrate how it is produced digitally by making a physical interface with which people can manipulate the sample rate of a signal.

Bit rate = (sampling rate) x (bit depth) x (number of channels)

Sampling rate: the number of samples per second (or per other unit) taken from a continuous signal to make a discrete signal.

Audio bit depth: the number of bits of information recorded for each sample. Bit depth directly corresponds to the resolution of each sample in a set of digital audio data. Common examples of bit depth include CD quality audio, which is recorded at 16 bits, and DVD-Audio, which can support up to 24-bit audio.

Bit rate: the number of bits of data transferred or processed each second.
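As a quick check of the formula above, here is a small Python sketch. The CD figures (44,100 Hz, 16 bits, 2 channels) are the standard ones; the 8-bit mono phone line is an assumption added for comparison.

```python
def bit_rate(sampling_rate_hz, bit_depth, channels):
    """Bit rate = (sampling rate) x (bit depth) x (number of channels)."""
    return sampling_rate_hz * bit_depth * channels


if __name__ == "__main__":
    cd = bit_rate(44_100, 16, 2)    # CD audio: 16-bit stereo at 44.1 kHz
    phone = bit_rate(8_000, 8, 1)   # assumed: 8-bit mono at 8 kHz
    print("CD audio:", cd, "bits per second")
    print("Phone-style audio:", phone, "bits per second")
```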

By making a physical interface that people can interact with, I want to let people be responsible for the sample rate (I was thinking they could push a button over and over rapidly, but no matter what, the sample rate will be WAY TOO SLOOOOW). Perhaps a lever would work. Cell phone / walkie-talkie transmission uses a sample rate of 8,000 Hz. I would like to design a way for two people to interact, or for one person to interact with a device / a computer, and manipulate the timing with which the computer asks for incoming data.
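To put a number on "way too slow", here is a rough Python sketch. It assumes someone can press a button about 10 times a second (a guess) and uses the Nyquist rule that a sample rate can only capture frequencies up to half its value. The `alias_frequency` helper is a made-up name; it estimates what a pure tone would appear as when sampled too slowly.

```python
def nyquist_limit(sample_rate_hz):
    """Highest frequency a given sample rate can represent."""
    return sample_rate_hz / 2


def alias_frequency(tone_hz, sample_rate_hz):
    """Apparent frequency of a pure tone after sampling at sample_rate_hz."""
    return abs(tone_hz - sample_rate_hz * round(tone_hz / sample_rate_hz))


if __name__ == "__main__":
    button_rate_hz = 10  # assumed: roughly 10 presses per second
    for rate in (8_000, 100, button_rate_hz):
        print(rate, "Hz sampling -> limit", nyquist_limit(rate), "Hz,",
              "a 440 Hz tone shows up as", alias_frequency(440, rate), "Hz")
```

With these assumptions, button mashing tops out at a 5 Hz limit, well below anything audible, which supports trying a lever or some other faster input.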

This next part is maybe less fleshed out:

I would also like to make an interface that allows people to change the resolution of recorded samples, and see how it affects the audio quality. I would like to make a display using light and/or color to show the relationship of difference in resolution possibilities with different bit depths.
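One way to see how resolution affects quality is to round each sample to the nearest level available at a given bit depth and look at the worst-case error. The Python sketch below does this for one cycle of a sine wave. The function names and the choice of a sine wave are illustrative assumptions, not part of the project.

```python
import math


def quantize(sample, bit_depth):
    """Round a sample in [-1.0, 1.0] to the nearest of 2**bit_depth levels."""
    levels = 2 ** bit_depth
    step = 2.0 / (levels - 1)            # spacing between adjacent levels
    index = round((sample + 1.0) / step)
    return index * step - 1.0


def max_error(bit_depth, n=1000):
    """Largest rounding error over one cycle of a sine wave."""
    worst = 0.0
    for i in range(n):
        s = math.sin(2 * math.pi * i / n)
        worst = max(worst, abs(s - quantize(s, bit_depth)))
    return worst


if __name__ == "__main__":
    for depth in (2, 4, 8, 16):
        print(depth, "bits ->", 2 ** depth, "levels, max error", max_error(depth))
```

Each extra bit doubles the number of levels, so the error shrinks quickly; a light or color display could map that error (or the number of levels) to brightness.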

FECPW – What I want to build (class 1)

I have already started this using Processing, but I’d like to build this as a dynamic site, possibly using Google Maps:

A map of the US, showing car pool requests:

Something that ends up looking like this (Ben Fry’s zipdecode map of zip codes is a nice template)

And it’d be able to zoom:

And it will look something, really roughly, like LinkedIn’s InMap, which visualizes your links as a network diagram. (In other words, it will have two dots and a line connecting them, for starters.)

For starters, I’d like to create a wiki for something, maybe carpool requests, that takes people’s current address, adds it correctly to a Google map or some other map interface, and shows it nicely:

Robot dances to Jane Fonda

Andi, Dave and I pulled together this animation for our Comm lab animation project. It needs some fixing. We found Jane Fonda’s video and fell in love. I guess we decided to go ridiculous – why would a robot need exercise, after all?

DABA girls

D.A.B.A. is the short film that I made with 4 others. It portrays the ongoing story of Dating a Banker Anonymous, which was covered in the NY Times and became a large internet meme (mostly in the blogosphere, as women collaborated with others for support). The DABA phenomenon was about women who were dating bankers during the 2007 Wall Street collapse and had to learn how to live with boyfriends who were suddenly not as well off anymore. We filmed this at my mother’s psychoanalysis office, at McDonald’s, on Wall Street, and at various other locations. We spent a day at David’s friend’s house editing it.

Byte Light

Jack and I collaborated to make ByteLight, which is an interactive light fixture that represents a giant LCD pixel. People can manipulate R, G, and B values of the pixel by flipping on and off 24 switches, which represent individual bits.

In the video above, someone flips the switches on and off, and watches the ambient light change color. The user can look up at the light fixture and see what happens to individual R, G, and B values when different combinations of switches are flipped, and the projected light from the mixed R, G, and B lights shows the color output of the pixel. It is possible to play and interact in a fun and compelling way, and also to experiment with bit data to gain a more comprehensive understanding.

Here are some images from our process:

see here for more

ByteLight update

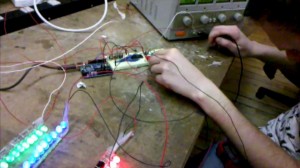

We finished our R, G, and B strips (each with about 45 LEDs) and hooked them up to the bench power supply. We are feeding voltage through the breadboard, then to both the Arduino V-in and two adjustable voltage regulators (LM317), then through three transistors. One regulator goes to the green and blue LED strips, which each ideally require about 3-3.5 V, and the other goes to the red LED strip, which requires about 2.5 V.

Here we are testing the lights. It took us a little while to figure out how to get the brightness of each color to be more calibrated – since the red ones require less voltage, we realized we’d need an extra voltage regulator.

We got white glossy plexiglass which we will sand to look matte. With about 4′x4′ we should be set, although we have a lot extra. We will use AMS to cut out the parts for the light fixture (a box roughly 20″x20″x4″) and a box to contain all the switches, the Arduino, the wiring, and the breadboards (roughly 35.5″x4″x4.625″). I made the templates using Illustrator.

269 Canal Street is a decent place to get parts. We got a power adapter that goes up to 5 A, so we should have enough to get the desired voltage and current for the LEDs.

Crepuscule

This shows a video with the effect I want using the mouse – as the mouse scrolls from left to right, the lion walks backwards away from you slowly, until he stops altogether. Then he begins to walk towards you until it gets too blurry to see.

This is a video of images of my mouse and rabbit. The mouse scrolling across the screen achieves the desired effect.

This video shows the circuit for the ultrasonic range finder. At first I was using face detection to determine the distance of the viewer but I decided to use an ultrasonic range finder instead. I think it is a little more accurate at far distances, and sometimes face detection wouldn’t detect me if I had glasses on.

Below, I show the code that I use

(more…)

Big Pixel – process

see here for older Big Pixel progress links.

Jack and I have continued work with our light fixture, aka Byte Light.

We have soldered 50 red, 50 blue, and 50 green 10 mm superbright LEDs onto three plexiglass rods, one color per rod, and each rod will represent one of the R, G, and B lines in our LCD pixel representation. Each LED needs 20 mA and between 2 and 4 V, depending on the color. Therefore, we need an external power supply that goes up to around 3 A (when every switch is on). We will wrap each colored LED rod in frosted paper, and encase the three in a square plexiglass box that will shine light downwards on the user, who will be manipulating the 24 switches on the wall.
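The 3 A figure follows from the LED count and the per-LED current. A small Python sketch of that arithmetic, assuming every LED draws its full 20 mA at once (that is, all LEDs at full brightness, which is the worst case):

```python
LED_CURRENT_MA = 20                                   # per-LED current from the post
LEDS_PER_ROD = {"red": 50, "green": 50, "blue": 50}   # LED counts from the post


def rod_current_ma(count, per_led_ma=LED_CURRENT_MA):
    """Current for one rod, assuming each LED draws its full rated current."""
    return count * per_led_ma


def total_current_a(leds_per_rod=LEDS_PER_ROD):
    """Worst-case supply current in amps with every rod fully on."""
    total_ma = sum(rod_current_ma(n) for n in leds_per_rod.values())
    return total_ma / 1000


if __name__ == "__main__":
    for color, count in LEDS_PER_ROD.items():
        print(color, "rod:", rod_current_ma(count), "mA")
    print("total:", total_current_a(), "A")
```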

Our circuit consists of two multiplexers that take input from the 24 light switches. The Arduino then takes the binary input and translates it into byte values (0-255) for R, G, and B, and using three transistors, we will alter the brightness of each R, G, and B light rod.
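The switch-to-byte translation can be sketched in a few lines. This is a Python illustration of the idea, not the Arduino code. It assumes the 24 switches are read in order as 8 red bits, then 8 green, then 8 blue, with the most significant bit first; the real wiring order may differ.

```python
def bits_to_byte(bits):
    """Combine 8 switch states (most significant bit first) into 0-255."""
    value = 0
    for bit in bits:
        value = (value << 1) | (1 if bit else 0)
    return value


def switches_to_rgb(switches):
    """Split 24 switch states into (R, G, B) byte values."""
    if len(switches) != 24:
        raise ValueError("expected exactly 24 switch states")
    return tuple(bits_to_byte(switches[i:i + 8]) for i in (0, 8, 16))


if __name__ == "__main__":
    all_on = [1] * 24
    only_first = [1] + [0] * 23
    print(switches_to_rgb(all_on))      # every bit set in all three bytes
    print(switches_to_rgb(only_first))  # only the top red bit set
```

Each resulting byte could then drive one transistor as a brightness level, which is where the 0-255 range comes from.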